The Federal Risk and Authorization Management Program (FedRAMP) was established in 2011 as a way for the U.S. government to set a standardized framework and manage security risks when adopting cloud services across its many agencies. As the U.S. government continues to seek modernization of its technology capabilities – the most recent initiative being DISA’s Thunderdome award – cloud service providers (CSPs) are frequently being asked to comply with the FedRAMP baseline.

For the uninitiated, the FedRAMP authorization process is a daunting task, especially when you consider that the FedRAMP Moderate baseline consists of 300+ controls! Many of those controls are written from the perspective of on-premises infrastructure, which can be confusing for cloud-native applications. There are also a number of audit, continuous monitoring, and reporting requirements that may necessitate process and/or culture changes within an organization.

The benefit, however, is that it becomes much easier to do business with the U.S. government, for example, selling cloud services to the Pentagon, the Department of Energy, or the CDC. This is because U.S. government agencies can request access to the FedRAMP package of FedRAMP Authorized products. This reuse of audit material reduces the number of vendor assessment questionnaires GRC and product teams need to process. It saves everyone a lot of duplicated effort.

Palo Alto Networks has successfully gone through the FedRAMP authorization process for a number of our products, and we’d like to share a few of the lessons we’ve learned along the way. If you work in InfoSec, IT, product development, SRE, or technology leadership and are looking into FedRAMP authorization, you’ll want to address the following pain points in advance.

Testing Boundaries

The biggest area I’ve seen product engineering teams struggle with is defining their FedRAMP boundary. The FedRAMP PMO offers boundary guidance, but it still might not be clear what should and shouldn’t be included. When deciding whether something should be included in your FedRAMP boundary, there are two key rules to consider:

- Will that “thing” store, process, and/or transmit data generated by or for a U.S. government customer?

- Does that “thing” affect the CIA triad of other systems/services within your boundary?

The first rule may seem self-explanatory (this is typically your product), but the tools used to support your cloud service offering (CSO) are often missed. For example, to troubleshoot issues, your customer support organization may need to collect things like logs or packet captures from U.S. government customers, which would bring those supporting tools into the boundary.

The second rule can also be muddy. While the usual suspects like your authentication service, endpoint protection, and firewalls are expected to be in boundary, it may not be obvious that other services such as your CI/CD pipelines, your secrets management tools, and your artifact repositories should also be in boundary. You also need to consider any third-party cloud services (for example, Okta, OneLogin, or Ping) that are leveraged as part of your CSO or its supporting infrastructure. Those cloud services are also part of your FedRAMP boundary and should be assessed accordingly.
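As a rough illustration, the two rules can be applied to a system inventory as a simple either/or test. This is a minimal sketch, not an official scoping method: the `Component` type, its field names, and the inventory entries are all hypothetical, and real scoping decisions still need to be checked against the FedRAMP PMO's boundary guidance.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Component:
    """A system, tool, or third-party service being considered for scoping."""
    name: str
    # Rule 1: stores, processes, and/or transmits U.S. government customer data.
    handles_gov_data: bool
    # Rule 2: can affect the CIA triad of systems already inside the boundary.
    affects_cia_in_boundary: bool


def in_boundary(component: Component) -> bool:
    """Return True if either rule places the component inside the boundary."""
    return component.handles_gov_data or component.affects_cia_in_boundary


# Hypothetical inventory; the names and flags are illustrative only.
inventory = [
    Component("product-api", True, True),
    Component("support-log-collector", True, False),
    Component("ci-cd-pipeline", False, True),
    Component("marketing-website", False, False),
]

in_scope = [c.name for c in inventory if in_boundary(c)]
print(in_scope)
```

Note that the support tool and the CI/CD pipeline each land in scope on a single rule, which is exactly the kind of component that tends to get missed.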

Another boundary issue I’ve seen teams struggle with is: When can we poke holes in the boundary? The general rule is that no U.S. government data can leave the FedRAMP boundary; however, there are exceptions to this if the U.S. government data can be de-identified. There may be several different valid scenarios, such as sharing malicious file hashes from FedRAMP to a commercial environment or getting generic usage analytics for your FedRAMP CSOs.
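The file hash scenario might look something like the sketch below: only a digest and a verdict leave the boundary, while the customer identifiers and the file content stay behind. The `Detection` record and its fields are hypothetical, and whether a given field really counts as de-identified is something to confirm for your own system rather than assume from this example.

```python
import hashlib
from dataclasses import dataclass


@dataclass(frozen=True)
class Detection:
    """Hypothetical in-boundary record of a malicious file."""
    agency_id: str
    hostname: str
    file_bytes: bytes
    verdict: str


def to_shareable_indicator(detection: Detection) -> dict:
    """Keep only fields that do not identify the customer or expose file content."""
    return {
        "sha256": hashlib.sha256(detection.file_bytes).hexdigest(),
        "verdict": detection.verdict,
    }


# Illustrative values only.
record = Detection("agency-123", "host-1", b"example payload", "malicious")
indicator = to_shareable_indicator(record)
print(sorted(indicator))
```

Building the outbound record from an explicit allowlist of fields, rather than deleting sensitive fields from a copy, means a field added to `Detection` later does not leak by default.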

FIPS Woes

Encryption is another area that may be daunting for engineering teams. There are multiple controls in the FedRAMP baseline that deal with encryption. However, the TL;DR boils down to two key points:

- All data at rest and in transit must be encrypted

- All encryption used in the CSO and its supporting infrastructure must leverage FIPS-validated cryptography

To meet this requirement, you must use a FIPS-compliant library on a compliant operating system, and that operating system must be running in FIPS mode. Enabling FIPS mode on Ubuntu Pro and RHEL, for example, is very straightforward.
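One quick sanity check on a Linux host is to read the kernel's FIPS flag, which is exposed at `/proc/sys/crypto/fips_enabled`. The helper below is a minimal sketch with a hypothetical function name. It only reports what the kernel says, so it does not by itself show that the cryptographic library your application uses is a validated module.

```python
from pathlib import Path

# Linux exposes the kernel FIPS flag here; the file contains "1" when enabled.
FIPS_FLAG = Path("/proc/sys/crypto/fips_enabled")


def kernel_fips_enabled(flag_path: Path = FIPS_FLAG) -> bool:
    """Return True if the kernel reports FIPS mode as enabled."""
    try:
        return flag_path.read_text().strip() == "1"
    except OSError:
        # Flag file missing or unreadable: treat as not enabled.
        return False


print("FIPS mode enabled:", kernel_fips_enabled())
```

A check like this could run at service startup or in a deployment gate so that a host that has dropped out of FIPS mode is caught early.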

Sharing is Caring

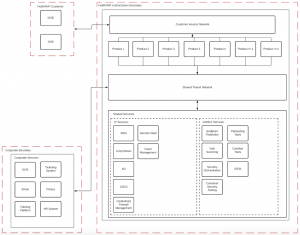

For CSPs looking to bring multiple products through the FedRAMP authorization process, adopting a shared services model for supporting infrastructure will help accelerate the time it takes to become audit ready. Services like CI/CD, secrets management, privileged access management, vulnerability scanning, and audit logging are prime choices for shared services because they can be built once, then reused across all in-scope products. Product teams can then focus on meeting the controls they’re directly responsible for, instead of reinventing the wheel for things like vulnerability scanning, endpoint protection, and SIEM.

An example of shared services design could look something like this:

FedRAMP is a Big Deal

I mean that literally. The Department of Defense, at 2.9 million employees, is one of the largest employers in the U.S. Combining DoD with the remaining federal civilian agencies, it’s not hard to see the potential for massive deals. The opportunities increase even further when you consider that companies in the Defense Industrial Base (DIB) sector are also required to leverage services authorized at FedRAMP Moderate, thanks to DFARS clause 252.204-7012 and the upcoming CMMC program, both of which aim to protect controlled unclassified information (CUI). Protecting CUI, however, is a blog post for another day!